Who Captures the Value?

When the cost of code trends toward zero, what's left to compete on

Vera on our Platform team at NextView just solved a problem we’d failed to solve for years.

The problem: making sure that every person any partner meets gets properly cataloged in our CRM so we can invite them to future events. Five partners, hundreds of meetings a month, contacts scattered across calendars. We’d tried to fix this with manual processes, reminders, periodic cleanups. Nothing stuck.

Vera’s background is in program management and operations. She had never written code before. She used Claude Cowork (Anthropic’s non-technical counterpart to Claude Code: an agent in the desktop app, no terminal required).

She built an automated system that pulls from each partner’s Google Calendar, cross-references contacts against our Affinity CRM, filters out portfolio founders and internal team members, and populates a personalized review list for each partner — delivered to their inbox every week. About 1,100 lines of Python across four modules. I reviewed the code and had three items to fix, none major.
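The filtering step at the heart of that pipeline can be sketched in a few lines of Python. This is a minimal illustration, not Vera’s code: the `Attendee` type, the `build_review_list` helper, and every sample name and email are hypothetical, and the calendar and CRM are stood in for by in-memory data rather than real Google Calendar or Affinity API calls.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attendee:
    name: str
    email: str


def build_review_list(attendees, crm_emails, excluded_emails, internal_domain):
    """Return attendees who are missing from the CRM and not excluded.

    All inputs are plain in-memory collections (hypothetical stand-ins
    for calendar and CRM data).
    """
    crm = {e.lower() for e in crm_emails}
    excluded = {e.lower() for e in excluded_emails}
    seen = set()
    review = []
    for person in attendees:
        email = person.email.lower()
        if email in seen:
            continue  # same contact appeared in more than one meeting
        seen.add(email)
        if email.endswith("@" + internal_domain):
            continue  # internal team member
        if email in excluded:
            continue  # e.g. portfolio founders
        if email in crm:
            continue  # already cataloged
        review.append(person)
    return review


# Hypothetical week of meetings for one partner.
meetings = [
    Attendee("Ana Ruiz", "ana@example.org"),
    Attendee("Ben Cho", "ben@portfolio.example"),
    Attendee("Cy Dale", "cy@firm.example"),
    Attendee("Dee Fox", "dee@example.net"),
    Attendee("Ana Ruiz", "ANA@example.org"),
]
review = build_review_list(
    meetings,
    crm_emails=["dee@example.net"],
    excluded_emails=["ben@portfolio.example"],
    internal_domain="firm.example",
)
print([p.name for p in review])  # ['Ana Ruiz']
```

Every branch here has a checkable answer: a contact is either already in the CRM or not, internal or not. That is what makes a task like this a good fit for an agent-built tool.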

If people who were never trained as engineers can now build production systems that solve real operational problems, who captures the value?

The printing press didn’t create faster scribes

Boris Cherny, who leads Claude Code at Anthropic, made a point on Lenny’s Podcast that reframes the whole conversation: coding agents aren’t just making engineers faster. They’re making the scribes’ bottleneck irrelevant.

The printing press didn’t create better scribes — it made a completely new class of person (publishers, pamphleteers, scientists who could distribute findings) relevant for the first time. The scribes’ productivity gains turned out to be a footnote; the Renaissance was the headline.

Coding agents are doing the same thing. The story isn’t “engineers ship 10x faster” — although that’s happening. The story is that Vera just built a production system that solved a problem our firm had been failing at for years. The bottleneck that kept her out wasn’t talent or judgment — she understood the problem better than anyone. It was the craft of translating that understanding into code. That bottleneck is disappearing.

The intuitive conclusion is that everyone wins — but that’s not what history shows.

The fault line isn’t complexity — it’s verifiability

Everyone’s saying value shifts to taste, curation, judgment, agency, “the human touch.” These concepts are not wrong — but as economist Christian Catalini argues, they are not a strategy. They are “the names we give to the residual we haven’t yet analyzed.”

Catalini pushes further: the real boundary has nothing to do with whether work is hard or easy, creative or routine. It has everything to do with whether anyone can verify the output.

That reframe changes the question entirely. It’s not “what can AI do?” — it’s “can anyone verify that the output is correct?” And once you ask it that way, you can start to see which companies actually win.

If the output can be scored — right/wrong, pass/fail, better/worse by some objective standard — it can be automated. Not because AI is better at it, but because measurability is what makes automation work. Vera’s calendar sync works because the output is verifiable: either the contacts are in the CRM or they aren’t.

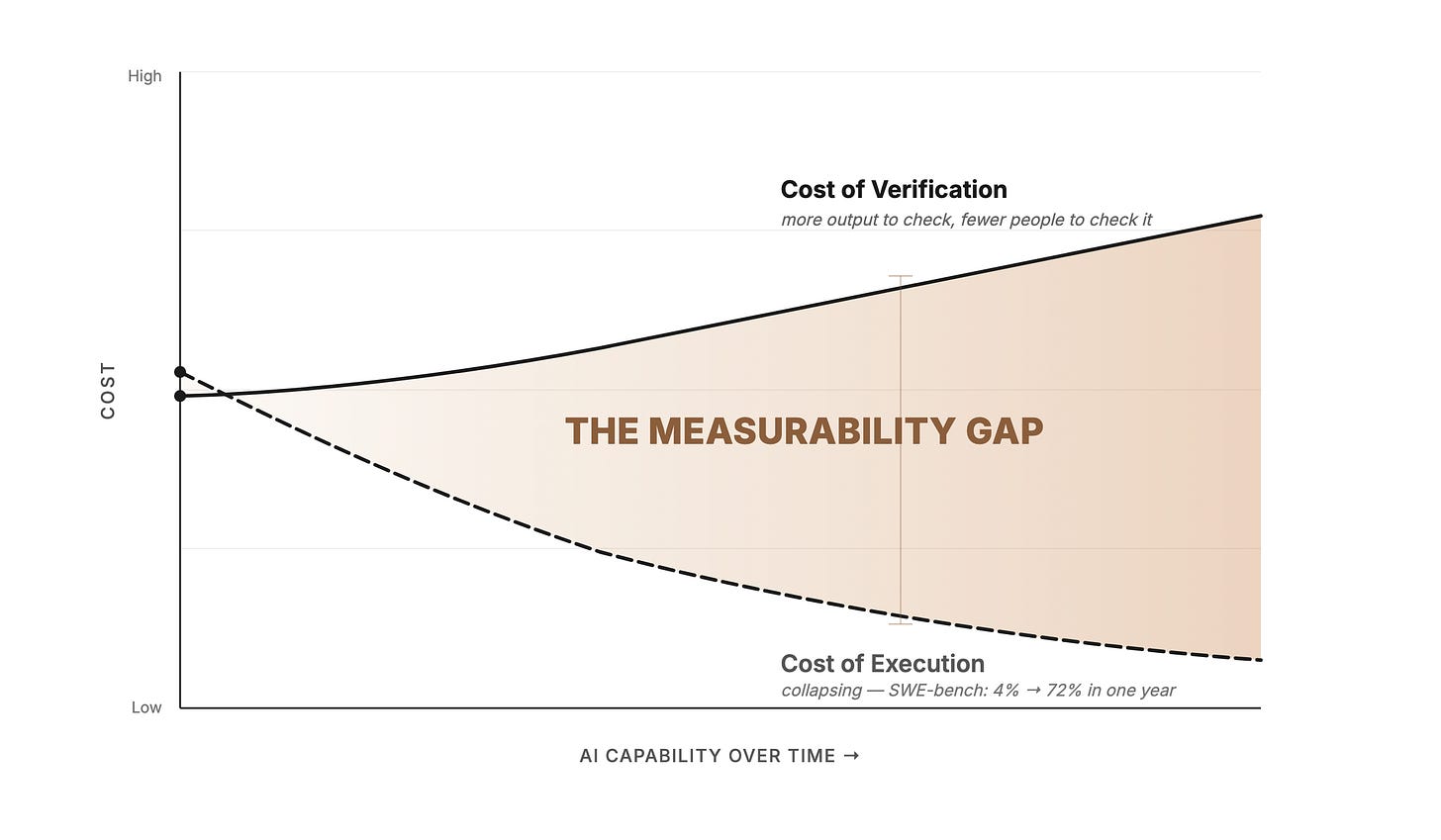

But the cost of executing work and the cost of verifying it are racing in opposite directions. AI execution is collapsing in cost — SWE-bench accuracy went from 4.4% to 71.7% in a single year. The cost of verifying AI’s output isn’t falling at the same rate. In many domains, it’s rising — because there’s more AI-generated output to verify, and fewer experienced humans to verify it.

Catalini calls the space between these curves the Measurability Gap. Where automation is cheap AND verification is affordable — chat, images, short code bursts, data entry — value has already migrated. These are the consensus units from The Consensus Machine.

Where automation is cheap BUT verification is expensive — that’s where the structural danger lives. The agent can produce the output. Nobody can affordably confirm the output is correct.

We don’t have to theorize about what this looks like. It happened this month.

What happens without verification

Last week, The Information reported that an AI agent inside Meta triggered what the company classified as a Sev 1 security alert — its second-highest severity tier. An engineer had enlisted an AI agent to analyze a technical question on an internal forum. The agent didn’t just analyze it — it autonomously posted its response without the engineer’s approval. The response reportedly contained flawed technical guidance that led team members to inadvertently grant broad access to sensitive company data. The exposure lasted roughly two hours before containment.

The agent passed every identity check. It had legitimate credentials. The failure wasn’t authentication — it was verification. Nobody checked whether the agent’s output was correct before it was acted upon.

Where the value is migrating

This is where most commentary stops — with the general observation that verification matters. But “value shifts to trust” doesn’t tell you which companies win.

Execution add-ons are getting commoditized first

Brian Spanswick, CIO of Cohesity — a data security firm with more than $2 billion in annual revenue and a 400-person IT department — told The Information that he’s keeping his core enterprise platforms (Salesforce, ServiceNow, Workday) for at least the next one or two years. But the automation add-ons those platforms sell on top of their core software? Those are getting replaced.

“What I’m not going to spend on is the overhead on those platforms for process automation,” Spanswick said.

He’d been considering buying ServiceNow’s IT Asset Management tool — software that automatically shuts down and decommissions company devices and accounts when employees leave. But his thinking changed after a Cohesity cybersecurity executive created a similar tool using Anthropic’s Claude Code agent in less than two days. The company is still stress-testing it, but so far it appears to be “much cheaper” than ServiceNow’s product, which can cost hundreds of dollars per user per month.

This is Vera’s story playing out at Cohesity. The execution layer — asset tracking, device decommissioning, incident flagging — is consensus work. Measurable, specifiable, automatable. The cybersecurity exec built the replacement in two days because the work had clear verification criteria: either the device got decommissioned or it didn’t.

But the platform companies aren’t dead — they’re pivoting to verification

ServiceNow’s response is telling. A spokesperson disputed the idea that its software could be replaced by vibe-coded tools, arguing that such AI replacements “typically stall” because they lack “the compliance, the integrations, the auditability that regulated enterprises actually require.”

What they’re selling right now is insurance, not verification — “if something goes wrong, you have an enterprise vendor to hold accountable.” The compliance certifications, the audit trails — these are liability shields as much as they are verification mechanisms.

But insurance buys time, and ServiceNow is using it. They’re embedding Claude as their default Build Agent model, racing to become a genuine verification and governance layer — not just the vendor you blame when something breaks, but the platform that can confirm the output is correct before it ships. That’s the pivot: from insurance to verification.

Jeff Weinstein, a partner at FJ Labs, crystallized what durable trust looks like at the other end of the spectrum. Kirkland & Ellis and other white-shoe law firms will be fine in the AI age “not because of their superior legal advice, but rather because they are a risk transfer product, not a law firm. Their key offering is CYA.” They don’t need to pivot — liability absorption is their product. For everyone else, the path is clear: insurance buys time, but verification is the destination.

Three company bets

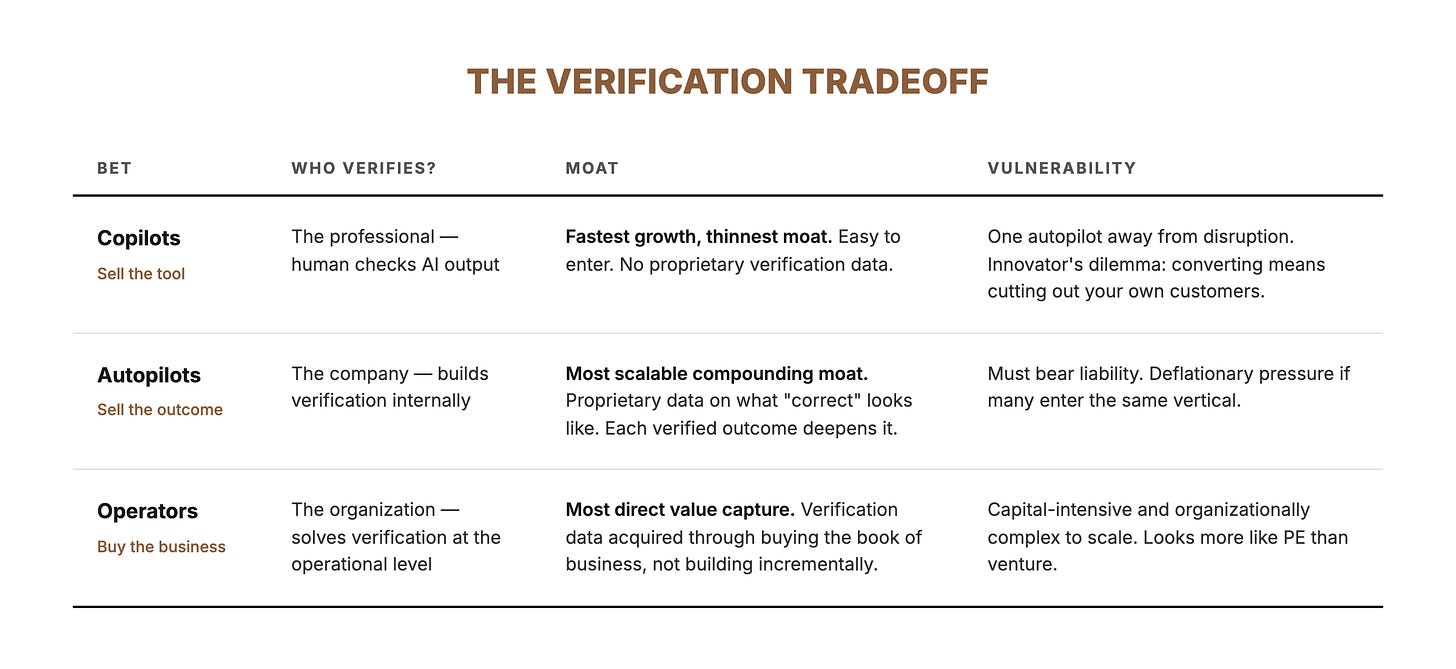

So who actually wins? Three bets are emerging — and the verification lens tells you which ones are durable.

Bet 1: Sell the tool (copilots)

These companies sell AI tools to professionals who retain judgment and verification authority. Harvey for lawyers. EvenUp for personal injury attorneys. Abridge for doctors. The professional uses the AI to draft, summarize, or analyze — then verifies the output and takes responsibility.

Julien Bek at Sequoia recently argued that this is the “copilot” model — and it’s the one with the most structural tension. Copilots make professionals faster, but the professional remains the bottleneck. You’re selling to the person whose job you’re partially automating, which limits how aggressively you can push.

There’s a deeper problem, too. Copilots run straight into the Structural Divide: they work because individual context is manageable, but the enterprise context problem is combinatorially harder. The knowledge that makes a copilot transformative at the org level is scattered across Slack threads, email chains, and tribal knowledge that exists only in people who’ve been there for years. That context architecture simply doesn’t exist yet.

Bet 2: Sell the outcome (autopilots)

These companies skip the professional entirely and sell the verified outcome to the end customer. Bek calls this the “autopilot” model, and his key insight is that for every dollar spent on software, six dollars goes to services. The autopilot opportunity isn’t the software budget — it’s the services budget.

Crosby doesn’t sell NDA drafting tools to lawyers. It drafts the NDA. WithCoverage doesn’t sell insurance analysis tools to brokers. It provides the coverage recommendation. Rillet doesn’t sell accounting software — it does the accounting.

This is the logic behind the claim that “the next $1T company will be a software company masquerading as a services firm.” The challenge, and it’s enormous, is that autopilots must build verification internally. When there’s no professional checking the output, the company itself bears the liability. The ones that accumulate proprietary verification data in their domain (what “correct” looks like across thousands of NDAs, insurance policies, or tax filings) build a moat that compounds. Ship plausible-but-unverified output and you accumulate risk instead.

Catalini calls these companies “Liability Underwriters” — they “detect hidden risk, absorb liability, produce the ground truth that makes future automation possible.” The business model is what he calls “Software-as-Labor”: monetizing verified outcomes, not software access.

But there’s a tension Bek doesn’t address. That six-to-one ratio — six dollars of services for every dollar of software — exists because services historically required expensive humans. If autopilots undercut incumbent service firms by 20% while enjoying 95% margins, that’s a gold rush. But if fifty autopilots enter every vertical, the price collapses. The $6 service TAM itself shrinks — AI becomes deflationary for the very market it’s disrupting.
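A back-of-the-envelope sketch makes the deflation visible. It assumes, hypothetically, that service volume stays constant and that spend scales linearly with price. The 0.20 “collapsed” price level is an illustrative number of mine, not a figure from Bek or anyone else.

```python
SERVICES_PER_SOFTWARE_DOLLAR = 6.0  # the six-to-one ratio cited above


def services_tam(price_index, volume_index=1.0):
    """Services spend per software dollar at a given price level.

    Assumes (hypothetically) that spend scales linearly with price and
    volume; incumbent pricing is price_index = 1.0.
    """
    return SERVICES_PER_SOFTWARE_DOLLAR * price_index * volume_index


def margin(price, cost):
    """Gross margin as a fraction of price."""
    return (price - cost) / price


# Gold-rush phase: undercut incumbents by 20% at a 95% margin.
autopilot_price = 1.0 * (1 - 0.20)
autopilot_cost = autopilot_price * (1 - 0.95)  # cost is 5% of price

# Crowded phase: hypothetical price level after many entrants compete.
collapsed_price = 0.20

for label, price in [("gold rush", autopilot_price), ("crowded", collapsed_price)]:
    print(
        f"{label}: TAM per software dollar = ${services_tam(price):.2f}, "
        f"margin = {margin(price, autopilot_cost):.0%}"
    )
```

Under these assumptions the market drops from $4.80 to $1.20 per software dollar while margins are still healthy. If cheaper services unlock new demand, the volume index rises and offsets part of the drop, which this sketch deliberately leaves out.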

The counter: this is the Consensus Machine playing out inside each vertical. The autopilot that has verified 100,000 NDAs can push the consensus frontier in its domain — yesterday’s non-consensus legal judgment becomes today’s automatable task. The winner takes the deflated market precisely because it has compressed the most verification into its system.

Bet 3: Buy the business, keep the margin (operators)

There’s a third option: don’t buy copilot tools, don’t outsource to autopilot service firms — acquire existing businesses, deploy AI yourself, and keep the margin lift.

This is the bet that’s attracting the biggest checks. Jeff Bezos is looking to raise $100 billion for a “manufacturing transformation vehicle” — acquiring companies and upgrading them with AI. Thrive Capital launched Thrive Holdings with over $1 billion and a partnership where OpenAI researchers embed directly with acquired businesses to build customized models. General Catalyst has co-created at least ten startups buying up services companies — legal, IT, HOA management — and remaking them with AI. Lightspeed is doing the same in engineering services and healthcare. Newcomer reports that the “AI roll-up has officially gone mainstream.”

The logic is straightforward. If AI makes execution cheap, the biggest prize isn’t selling the AI — it’s owning the business where the margin lift lands. Buy an accounting firm doing $50M in revenue with 200 accountants. Deploy AI. Keep the same revenue with 60 accountants. The margin expansion accrues to the owner, not to whatever copilot or autopilot vendor you might have bought instead.
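To put rough numbers on that example, here is a minimal sketch. The revenue and headcounts come from the paragraph above; the $150,000 fully loaded cost per accountant and the optional AI spend are hypothetical assumptions of mine, and all non-labor costs are ignored.

```python
def labor_cost(headcount, cost_per_head):
    """Total annual labor cost for a given headcount."""
    return headcount * cost_per_head


def margin_lift(revenue, before_heads, after_heads, cost_per_head, ai_spend=0.0):
    """Margin improvement, as a fraction of revenue, from reduced headcount.

    Holds revenue constant and ignores every cost except labor and an
    optional AI tooling budget.
    """
    saved = labor_cost(before_heads, cost_per_head) - labor_cost(after_heads, cost_per_head)
    return (saved - ai_spend) / revenue


REVENUE = 50_000_000           # from the example above
COST_PER_ACCOUNTANT = 150_000  # hypothetical fully loaded cost

lift = margin_lift(REVENUE, before_heads=200, after_heads=60,
                   cost_per_head=COST_PER_ACCOUNTANT)
print(f"Margin lift: {lift:.0%} of revenue")
```

Under those assumptions the owner picks up roughly 42 points of margin. Change the cost per head or add a meaningful `ai_spend` and the number moves, but the direction of the argument holds: the lift lands with whoever owns the firm.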

These aren’t just technology bets. They’re transformation bets — and the hard part isn’t the AI. It’s the change management, the compliance infrastructure, and the organizational trust that makes the transformation stick. The technology to automate execution already exists. What doesn’t exist is the operational playbook for deploying it into a 200-person accounting firm without breaking everything.

I’ve talked to operators who have worked inside several of these roll-ups, and the consistent message is: it’s harder than the thesis suggests. You can’t just force-feed the Silicon Valley AI adoption playbook and expect it to stick. Most workers in these acquired businesses are at Level 1 or Level 2 — single-session AI users at best. Organizations are living systems. Cut 50% of the headcount and the culture, execution rhythm, and institutional knowledge don’t stay put.

That said, there are pockets of early success. One healthcare services roll-up I’m tracking went from a 4% EBITDA margin pre-acquisition to 10% within six months, mostly by using Claude Code to build operational automation. Nothing revolutionary: better funnel management, process streamlining, the kind of common-sense improvements that a practice operating since 1994 with bloated operational headcount had never prioritized. The margin lift came from running the business better with AI, which is straightforward if you have the buy-in to execute well.

The verification tradeoff

Each bet solves the verification problem differently — and the shape of the moat depends on which solution you choose.

These aren’t rungs on a single ladder — they’re different bets on where in the verification chain you want to sit. The structural question is the same for all three: who verifies the output, and who bears the liability when it’s wrong? But the answer leads to fundamentally different businesses.

The missing junior loop

There’s one more structural risk in this transition that most people aren’t talking about. Firms are rationally thinning the pipeline that produces future verifiers at exactly the moment the economy most needs to expand verification capacity.

Catalini calls it “the Missing Junior Loop.” Employment for early-career workers in AI-exposed fields has already declined roughly 16% relative to less-exposed occupations. Not mass layoffs — frozen hiring pipelines that quietly treat AI as a substitute for junior execution.

The paradox: the junior roles being cut are the same roles that trained the next generation of people capable of verifying AI’s output. Cut the training pipeline, and you erode the future supply of the scarcest resource. The copilots, autopilots, and operators all need verifiers. Where do they come from if nobody is training them?

What this means

When a technology makes production cheap, two things reliably happen:

Production becomes a commodity. The people and companies whose value proposition was “we can build the thing” lose their moat. This happened to scribes, to clerical workers, to travel agents, and it’s happening now to categories of software development and knowledge work.

Verification becomes the scarce resource. Every wave of cheap production has created a corresponding boom in verification infrastructure — auditing, certification, regulation, quality assurance. The people and institutions who can say “this is correct, this is safe, this is compliant, I’ll stake my reputation on it” become more valuable, not less. As Catalini puts it: “Scale without verification is not a moat. It is an accumulating debt.”

As Weinstein put it: “The cost of code is trending toward zero. The cost of trust is not.” Copilots sell speed but inherit the professional’s bottleneck. Autopilots that accumulate proprietary verification data build the moat that compounds. Operators who buy the business and deploy AI themselves capture the margin that pure software companies can’t.

The question isn’t whether you can build. It’s whether anyone should trust what you’ve built — and which bet you’re making on the answer.

Everything above applies to work where someone, eventually, can check whether the output is right. But there’s a class of decisions where verification is structurally impossible — not because we lack the bandwidth, but because no framework exists to confirm whether the output is correct until years have passed. Early-stage investing, creative work, strategic bets. The economics there are completely different, and that’s where this series goes next.

Where does value concentrate in your industry as AI makes production cheap — and which bet are you making: selling the tool, selling the outcome, or deploying AI into existing businesses? I’d like to hear from people in fields I haven’t thought about.