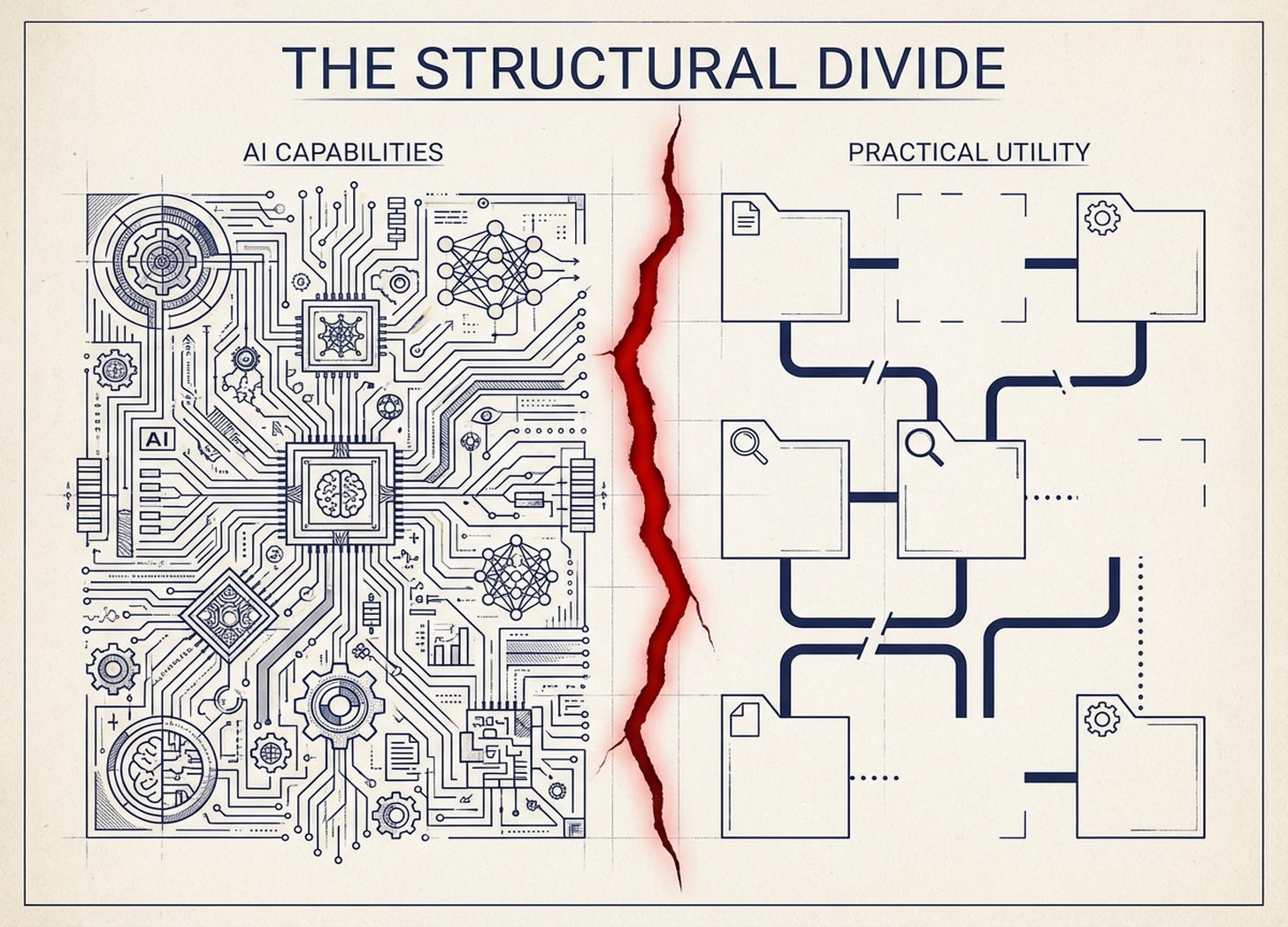

The Structural Divide

Why Enterprise AI Productivity Lags Individual Breakthroughs

There’s a strange disconnect in the AI productivity conversation.

On one hand: MIT’s “GenAI Divide” report created a stir last summer when it showed that 95% of enterprise AI pilots deliver no measurable P&L impact. On the other: Lenny Rachitsky’s survey of product managers, engineers, designers, and founders found 55% say AI has exceeded their expectations. Nearly 70% report improved work quality. Founders specifically? 49% are saving over 6 hours per week.

Both narratives are true. And I think the gap between them is structural, not behavioral.

The individual productivity flywheel is real

When I work with Claude Code, my context is contained. Everything lives in local files, markdown docs, a filesystem I control. The relevant information exists in one place. I can dump my professional history, my preferences, my constraints into structured text — and now every interaction builds on that foundation.

This works because the surface area is manageable — though even building personal context infrastructure took months of iteration to get right. Context scales roughly linearly with effort. At enterprise scale, it scales combinatorially.

The enterprise context problem is combinatorially harder

xAI’s “human emulators” project reveals what enterprise AI automation actually requires. They’re building AI systems — codenamed “Macrohard” — that simulate human employees by navigating keyboards, reading screens, and completing tasks across fragmented systems. These AI “employees” are already deployed internally at xAI.

The telling detail: the emulators sometimes cause confusion due to hallucinations. In one case, an AI asked a coworker to “come talk in person” — only for that person to arrive at an empty desk. The ambition is one million human emulators, potentially running on idle Tesla vehicles as distributed compute. The reason they need emulators — not just APIs — is instructive: enterprise context doesn’t live in one place. It lives in Slack, Google Docs, email threads, spreadsheets buried in attachments, meeting recordings no one watches, and tribal knowledge that exists only in people’s heads.

Replicating individual Claude Code magic at org level isn’t 10x harder. It’s exponentially harder.
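To make the linear-versus-combinatorial contrast concrete, here is a rough sketch. It rests on a simplifying assumption that is mine, not the MIT report's or xAI's: each context source (a Slack channel, a doc, a person's memory) is one item, and coherence means reconciling either pairs of sources or arbitrary combinations of them. The function name `context_surface` is hypothetical.

```python
from math import comb


def context_surface(n_sources: int) -> dict[str, int]:
    """Count what must stay coherent for n_sources context sources.

    Illustrative model only: real organizations are messier than this.
    """
    return {
        "sources": n_sources,            # linear: one item per source
        "pairs": comb(n_sources, 2),     # pairwise relationships between sources
        "subsets": 2 ** n_sources - 1,   # every non-empty combination of sources
    }


# A solo setup might have a handful of sources; a team has many more.
for n in (1, 5, 10, 20):
    print(context_surface(n))
```

Under this toy model a solo worker with a handful of sources has few relationships to track, while the pair and subset counts climb far faster than the source count as more systems and people are added.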

Four structural observations

1. Context is the bottleneck, not capability

AI capability exists. Most enterprises have access to frontier models. The gap isn’t the AI — it’s coherent context. When you work solo, you are the context. You know what you meant, what you tried before, what constraints matter. At org scale, that context is scattered across people, systems, and historical decisions that live nowhere except someone’s memory.

The MIT study confirms this: “Most GenAI systems do not retain feedback, adapt to context, or improve over time.” The failure isn’t models or infrastructure — it’s the learning gap.

2. Local-first works because scope is contained

It’s not an accident that Anthropic built Claude Code around markdown files and local filesystems. That design choice reveals something: agentic AI works best when context has boundaries. The moment you try to extend it across team members, tools, and processes, you’re not building a feature — you’re building infrastructure.

3. Behavioral explanations mask structural constraints

When someone says “enterprises are slow to adopt AI,” the implication is that it’s a people problem — training, culture, incentives. But what if it’s an architecture problem? What if the enterprise context layer simply doesn’t exist, and no amount of adoption push will change that?

The MIT data supports this: 80% of organizations have piloted ChatGPT or Copilot. Adoption isn’t the problem. Transformation is — and transformation requires infrastructure that doesn’t exist yet.

4. Most context infrastructure focuses on the wrong problem

There are two types of enterprise context: (1) tacit knowledge that lives in people’s heads — judgment, heuristics, the “how we actually think about this” — and (2) existing work products like documents, past decisions, and historical examples.

Most tools focus on extracting from #2 because it’s visible and feels scalable. But derived guidance requires massive labeling to get right, and in longitudinal domains — where outcomes take years to reveal — historical data may be less valuable than intuition suggests.

When testing the concept of an AI-assisted deal evaluation system at NextView, the historical approach seemed obvious: organize a decade of 100+ investment memos, label outcomes, train on patterns. The proof-of-concept showed promise, but going down this path would have meant labeling thousands of deals over a long arc in which outcomes take years to materialize, and that felt like poor ROI. Would deals from 2015 teach us how to evaluate founders in 2026?

Instead, we started with directly articulated judgment: prompts capturing how senior investors actually evaluate founders, market dynamics, and founder-market fit today. That approach worked: the system now produces decisions requiring correction less than 10% of the time. The historical approach might still help close the remaining performance gap, but the direct path got us to something useful faster.

Where this framing doesn’t fully apply

This analysis focuses on knowledge work — domains where context is judgment-heavy and tacit. Some enterprise AI applications don’t face these constraints:

Highly structured domains. Customer service routing, invoice processing, and code completion operate on explicit rules. Context is defined rather than discovered (or, in the case of code, easily discoverable). These use cases are succeeding, and the 95% failure stat likely understates wins in narrower applications.

Organizations with unusual context hygiene. Some companies—often smaller or engineering-led—maintain documentation cultures where tacit knowledge already lives in written form. For them, the context gap is smaller.

The brute-force bet might work. I’m skeptical of human emulators as a scalable solution, but if compute costs drop faster than context infrastructure matures, emulation might outcompete elegant architecture. The market will decide.

The structural divide is real, but it’s not universal. The question is whether your enterprise AI ambitions fall into the 80% of use cases requiring context infrastructure—or the 20% that don’t.

What this means for founders

If you’re building AI for enterprises, the question isn’t “how do we make our AI smarter?” It’s “how do we build shared context infrastructure?” The companies that solve the context problem — not just the capability problem — will capture disproportionate value.

Different companies are making different bets:

xAI’s approach: Human emulators that navigate existing fragmented systems. This is expensive and fragile, and it emulates complexity rather than reducing it.

Memory layer startups like Mem0, Letta (spun out of Berkeley’s MemGPT research), and Zep: Persistent memory so AI retains context across sessions — solves the “paste the same context over and over” problem. None have pulled away yet; the race is still early.

Anthropic’s bottom-up strategy: Developers first via Claude Code, then “everyone else too intimidated by CLI” via Claude Cowork, then scale through existing cloud relationships (AWS Bedrock, Google Vertex). Let individuals prove value before formalizing enterprise deals.

There’s likely a better path than any of these: tools that help organizations articulate coherent context from their fragmented reality — not extract it from artifacts, but surface it from the people who actually hold it.

What this means for operators

If you’re an individual getting massive productivity gains from AI, don’t expect that experience to translate directly to your team. The bottleneck isn’t showing people how to prompt — it’s building the context layer that makes those prompts useful.

Start small: a shared repo of decisions, a living doc of constraints, anything that externalizes context from people’s heads. The goal isn’t to train a model on your company’s documents. It’s to help the humans who hold tacit knowledge articulate it in a form AI can use.
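As one possible shape for a "shared repo of decisions", here is a minimal sketch. Everything in it is an assumption for illustration: the `DecisionRecord` class, its fields, and the example entry are hypothetical, not a format any tool mentioned here prescribes. It renders each decision as a small markdown file that both people and an AI assistant can read.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DecisionRecord:
    """One externalized decision: what was decided, why, and under what limits."""
    title: str
    decided_on: date
    decision: str
    rationale: str
    constraints: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the record as plain markdown suitable for a shared repo."""
        lines = [
            f"# {self.title}",
            f"Date: {self.decided_on.isoformat()}",
            "",
            "## Decision",
            self.decision,
            "",
            "## Rationale",
            self.rationale,
        ]
        if self.constraints:
            lines += ["", "## Constraints"]
            lines += [f"- {c}" for c in self.constraints]
        return "\n".join(lines) + "\n"


# Hypothetical example entry; the content is invented for illustration.
record = DecisionRecord(
    title="Keep one shared pricing sheet",
    decided_on=date(2026, 1, 15),
    decision="All quotes are generated from the shared pricing sheet.",
    rationale="Three teams were quoting from different spreadsheets.",
    constraints=["Finance approves any change", "Reviewed quarterly"],
)
print(record.to_markdown())
```

The rationale and constraints fields are the part that usually lives only in someone's head, so even a crude record like this captures more than the decision itself.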

The MIT stat and the builder hype can coexist because they’re measuring different things. Individual AI productivity requires capability plus personal context. Enterprise AI productivity requires capability plus shared context — and that infrastructure barely exists.

The structural divide isn’t closing with better models. It closes with better context architecture.
