The Judgment Layer

When the feedback loop can't close

One of the most compelling narratives in AI right now is the self-improving agent/system: point it at an outcome, give it a feedback loop, let it compound. A viral article from a former Cruise engineer captures the vision cleanly, and his own case study reveals exactly where it stops. What you do with that boundary turns out to matter more than where it is.

“AI systems went from completing sentences to completing KPIs.” That’s how Nicholas Charriere, who spent four years building self-driving systems at Cruise before founding Mocha, describes the shift in “The Great Convergence.” Every AI company is converging on the same architecture, he argues: self-improving agents that do knowledge work. Give an agent an outcome and the tools it needs. Let it loop. “The companies that own more of that loop will improve faster and their progress will compound.”

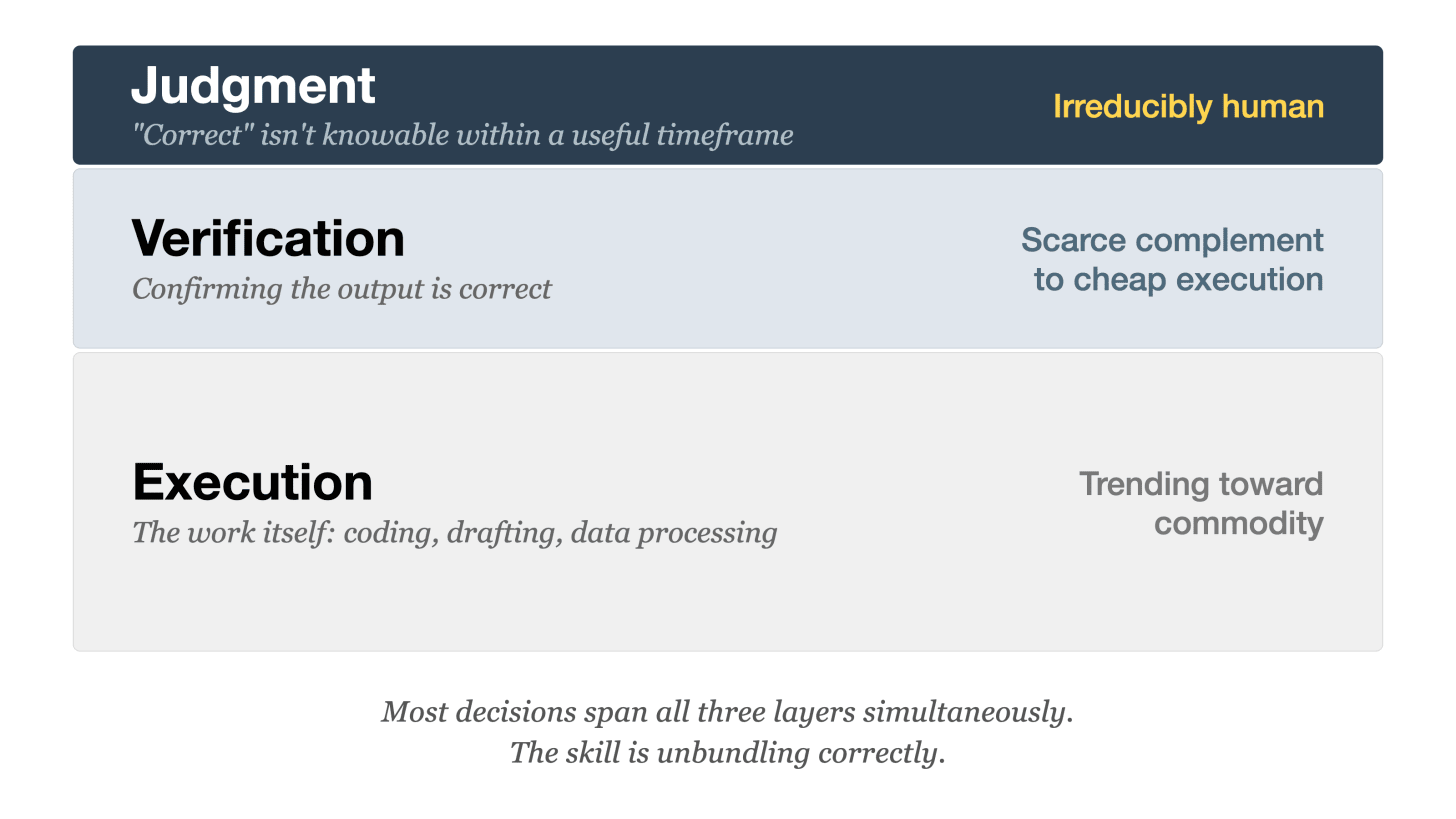

In Who Captures the Value?, I wrote about what happens when execution costs trend toward zero: value migrates to verification. But that assumed someone could eventually check whether the output was correct. What happens when the feedback loop takes over a decade to close, and every iteration is an irreversible bet?

Cruise’s continuously learning machine stopped at the last mile

At Cruise, the engineering north star was a Continuously Learning Machine: drive, collect data, retrain the model, deploy, on an ever-tightening loop. In four years, they compressed the deployment cycle from quarterly to weekly, real improvement by any measure.

But the machine stopped short of its own ambition. Charriere admits it plainly: “This was of course never quite achieved, there were always humans in the loop.” Humans steered at “the highest leverage areas: tough labeling, model tuning, deploy decision.”

The verifiable parts of the system converged as the thesis predicts. Data collection, model retraining, and deployment cadence all compressed toward routine. But the last mile stayed human: hard labeling edge cases, safety thresholds, whether to deploy on a given release. Simulation environments and synthetic data are active research areas in autonomous vehicles, but the feedback signal for “correct” on those decisions doesn’t resolve fast enough within real-world deployment timelines to train against.

The Judgment Layer

Most decisions that feel like pure judgment are actually bundles of verifiable sub-decisions plus a smaller layer of irreducible judgment. The hard skill is unbundling correctly. The Judgment Layer is the irreducible residue after you’ve automated everything that has a feedback loop fast enough to learn from.

Early-stage investing shows the pattern cleanly as I reflect on my experience over the past decade. Competitive landscape mapping, financial modeling, traction metrics: all verifiable. My partners and I can (and increasingly do) automate these leveraging AI. Founder-market fit, market timing, and conviction on a bet that takes over a decade to resolve: judgment.

Even “verifiable” sub-decisions are less clean than they look. Market sizing seems straightforward, but sizing the right market (especially for early-stage startups) is trickier than you’d expect. In 2014, NYU professor Aswath Damodaran valued Uber at $5.9 billion by sizing the market against taxis and black cars. Bill Gurley of Benchmark responded that the real market was all personal transportation, potentially 25x larger. Same company, same data available, completely different conclusion. The calculation is verifiable, but the choice of what to calculate is not.

The story around one of NextView’s earliest funds makes this concrete. My partners set the deliberate strategy of systematically evaluating performance across 30 portfolio companies and concentrated follow-on capital into the top four. Only one of these investments truly outperformed. But the judgment layer wasn’t which four to pick — it was the strategy of whether to double down at all. They understood power law dynamics well enough to know that in venture, a single winner can drive an entire fund’s returns. Without that concentration decision, the one company that did work wouldn’t have had enough capital behind it to matter. You can argue that the evaluation exercise was verifiable, but the conviction to concentrate was judgment.

The verifiable layer converges. The thin layer above it determines whether any of it is pointed in the right direction.

Where the self-improving loop breaks

Take the “Software Factory,” a three-person AI team formed inside StrongDM (an enterprise security/infra company) last July with an extreme founding mandate: no code written by humans, no code reviewed by humans. Developer Simon Willison wrote about it in February after visiting and seeing working demos. Their approach: let coding agents write and test software against “Digital Twin Universe” clones of the services it depends on (Okta, Slack, Google Docs), running scenarios at volumes far exceeding what’s possible against live services. It’s early (the team had only been running for three months when Willison visited) and expensive, at roughly $1,000 per engineer per day in token costs. But it’s one of the clearest working attempts at what Charriere describes.

This works because coding has something rare: a fast, clear definition of “correct.” Write a test spec, run the code against it, see if it passes. Define the KPI, build the loop, let it run.

But try applying “build once, runs forever” to “should we invest in this founder?” or “is this the right strategy?” The feedback loop can’t close because the signal for “correct” doesn’t arrive within a useful timeframe. The system can execute faster, but it can’t judge better when there’s nothing to judge against yet.

And even within the verifiable layer, individually correct sub-decisions can compound into a direction nobody intended. Each market-sizing model is defensible on its own term. Each competitive analysis checks out. But the accumulated weight of those analyses can drift the overall thesis without any single KPI flagging the shift. The signal is indirect: you notice the overall thesis has moved but can’t point to the specific decision that moved it. Only someone holding the full context catches that drift before it compounds wrongly.

The three layers — and why judgment sits on top

Who Captures the Value? mapped two layers of the value stack: execution (trending toward zero) and verification (the scarce complement). This post adds a third:

Most important decisions span all three layers simultaneously. A single investment decision involves execution (building the financial model), verification (checking the model against reality), and judgment (whether the market timing is right for this founder). The skill isn’t choosing which layer you’re in. It’s unbundling a decision into its components and treating each one appropriately.

The design skill: compound what you can, own what you can’t

The convergence thesis is right about the verifiable layers. The Software Factory experiment is already showing what this looks like in practice. Many companies are racing to experiment with building self-improving loops as the next frontier to unlock AI productivity.

The piece it leaves room for is the design skill — and it’s a practice, not a one-time architectural decision. What it looks like day to day is a question you keep asking: which parts of my work do I actually need to be the one deciding? The answer changes as the automated layer gets better. The question stays the same.

What’s a decision in your work that felt like pure judgment the first time you made it — but got more systematic the more you did it?

Thanks to my partner David Beisel for helping pressure-test the investing sections of this piece.