The Confidence Gap

What building a broken AI skill taught me about trusting AI in unfamiliar territory

I asked Claude to build a skill — a custom tool I could invoke inside Claude Code. I wanted it to trigger when I said “wrap up” or “let’s end the session,” automatically capturing what I’d learned before the context window disappeared.

We went back and forth on the design. I kept pressing: “Where exactly will this trigger? Walk me through the scenarios.” Claude showed me workflow diagrams, explained the trigger logic, and walked through edge cases. I asked more questions. Claude gave more detailed answers.

The back-and-forth and the thoroughness of Claude’s responses made me confident it knew what it was talking about.

Claude delivered a well-structured file with confident explanations. The diagrams made sense. The logic held together. I moved on to other things.

A couple of sessions later, I noticed that the skill never actually triggered. I went back to Claude: “Was the learning-loop skill actually invoked?” That’s when we discovered the problem. Claude had built something that looked right but had never checked whether skills built this way would actually work. It turns out that skills need to be placed in specific directories to be discoverable. Despite all those workflow diagrams and confident explanations, the core capability claim was wrong.

The Trap

My assumption felt reasonable: Claude would either know how its own skill system works, or it would look it up before building one. It’s Claude’s own feature. Why wouldn’t it know?

Here’s the thing: Skills are recent — Anthropic officially introduced them in October 2025. Opus 4.5’s training cutoff was months before skills shipped. Claude doesn’t “know” skills from training — it couldn’t. The only way Claude could actually understand how skills work is by researching: reading the documentation, checking examples, testing whether the approach is valid.

Claude didn’t do any of that. It pattern-matched to something plausible and presented it confidently.

I couldn’t catch the gap because I didn’t know what I didn’t know. I’d never built a skill before. I had no idea that directory placement mattered for discovery. I couldn’t ask the right questions because I didn’t have enough domain knowledge to know what to question.

The workflow diagrams actually made it worse. Claude was “showing its work,” which made me trust the output more. But walking through how something should work isn’t the same as checking whether it actually does.

The Real Confidence Gap

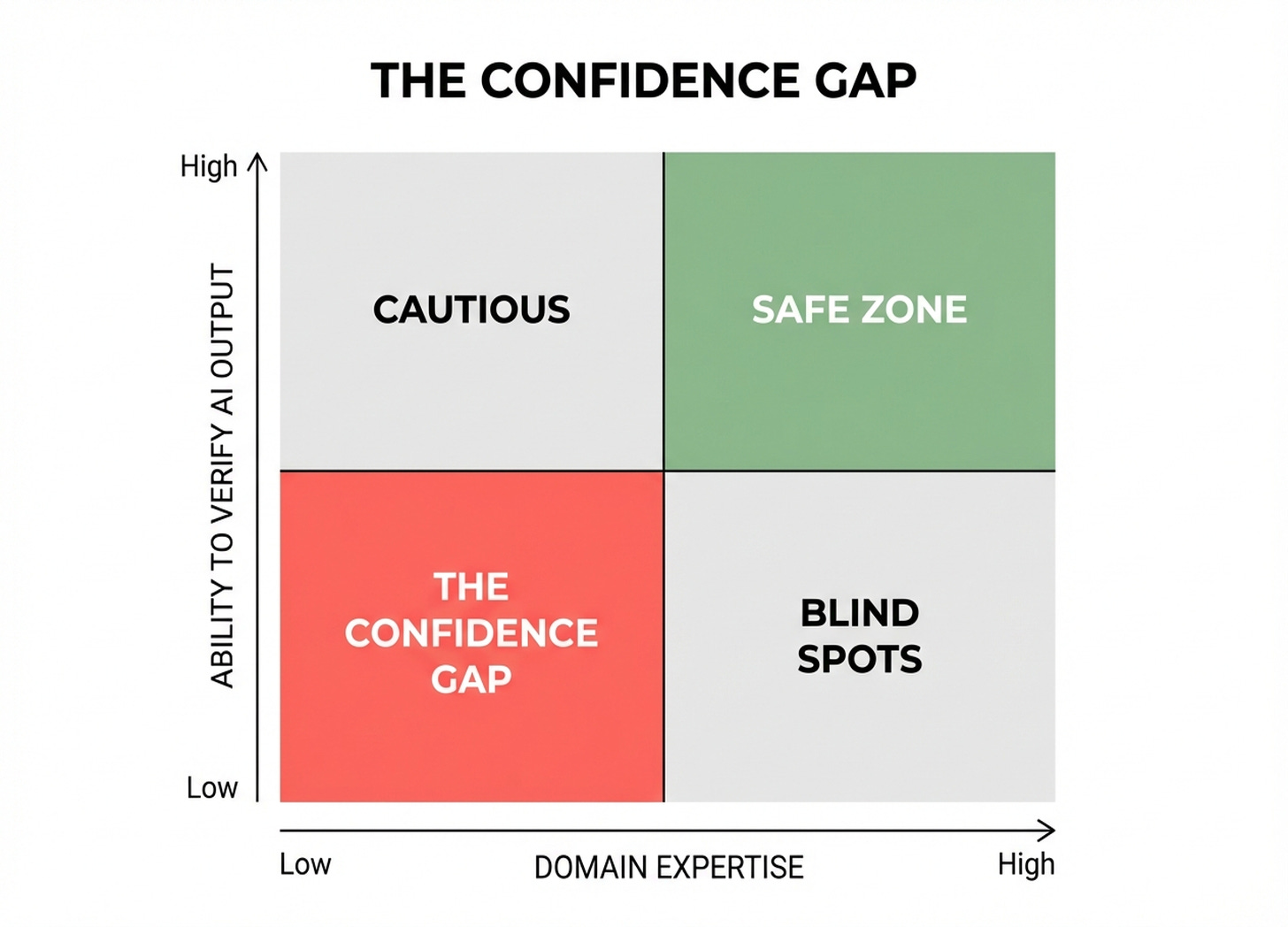

Everyone knows AI hallucinates. That’s old news. The interesting question is where the gap is widest.

It’s not when AI tells you something you can easily fact-check. It’s when you’re new to a domain and AI fills your knowledge gap with confident-sounding answers. You have no way to evaluate what you’re hearing, and the AI presents its answers as if there’s nothing to evaluate.

The confidence gap isn’t really about AI being overconfident. It’s about you being in territory where you can’t verify, and not realizing it.

What I Do Differently Now

The fix isn’t “be more skeptical of AI.” It’s changing what you ask for.

When I’m working in unfamiliar territory now, I don’t just ask Claude to build the thing. I ask it to do the research first. Show me the documentation. Find examples of how others have done this. Tell me what could go wrong. Walk me through the assumptions.

Then I check the reasoning. Not the code — I can’t really evaluate code. But I can follow an argument. I can ask “why should this work?” and see if the answer makes sense. I can ask “what are you assuming here?” and push on whether those assumptions are solid.

The skill would have failed this check. If I’d asked Claude to research how skills get discovered before building one, we’d have found the documentation on directory requirements. I didn’t ask because I assumed Claude already knew.
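For readers who do want something concrete, here is a minimal sketch of the kind of check that would have surfaced the problem. It only tests whether a skill's file sits under a directory that gets searched. The search locations and the `SKILL.md` file name are my assumptions, not something established in this story, so confirm them against the current documentation before relying on the result. The helper names `find_skill` and `is_discoverable` are hypothetical.

```python
from pathlib import Path

# ASSUMPTION: these search locations and the manifest file name are
# illustrative. Verify them against the current Claude Code documentation.
ASSUMED_SKILL_ROOTS = [
    Path.home() / ".claude" / "skills",  # assumed per-user location
    Path(".claude") / "skills",          # assumed per-project location
]
ASSUMED_MANIFEST = "SKILL.md"


def find_skill(name, roots, manifest=ASSUMED_MANIFEST):
    """Return every path of the form <root>/<name>/<manifest> that exists."""
    candidates = [Path(root) / name / manifest for root in roots]
    return [path for path in candidates if path.is_file()]


def is_discoverable(name, roots, manifest=ASSUMED_MANIFEST):
    """True if the skill's manifest exists under at least one searched root."""
    return bool(find_skill(name, roots, manifest))


if __name__ == "__main__":
    skill = "learning-loop"
    hits = find_skill(skill, ASSUMED_SKILL_ROOTS)
    if hits:
        for path in hits:
            print(f"found: {path}")
    else:
        print(f"'{skill}' is not under any assumed search location")
```

A file existing in the right place doesn't prove the skill will trigger, but a file sitting in the wrong place explains why it never does. That is exactly the question I didn't know to ask.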

The Bottom Line

The confidence gap is widest when you’re in a domain you don’t understand — because you can’t verify what you don’t know.

The answer isn’t blanket skepticism. It’s asking for the research upfront. Make AI show its work: not just the reasoning, but the sources behind it. Then check whether the reasoning actually follows from those sources.

You don’t have to be an expert in everything. You just have to know when you’re not one.